Illustrated by Jeff Prymowicz

Network penetration testing has traditionally been a highly manual discipline. Even with mature open-source tools such as Nmap, Metasploit Framework, BloodHound, and NetExec, and commercial platforms such as Nessus or Core Impact, the most important part of a successful engagement has always been human analysis. A tester scans the environment, reviews large volumes of output, identifies promising leads, and chains together multiple weaknesses to demonstrate real impact.

AI is beginning to augment that workflow. Instead of manually reviewing scan results and deciding what to investigate next, LLM-assisted systems can ingest tool output, suggest follow-up actions, and help construct potential attack paths across a network. The technology can meaningfully accelerate analysis, but it does not eliminate the need for technical expertise or established testing methodologies.

AI as an orchestration and reasoning layer

Most AI-driven penetration testing systems are not scanners or exploit frameworks themselves. Tools such as PentestGPT, PentAGI, and similar research prototypes act primarily as orchestration layers. They sit on top of traditional security tooling and use an LLM to assist with reasoning and workflow.

Some emerging systems, like RapidPen, attempt to go further by automatically executing reconnaissance and enumeration commands and feeding the results back into the model. However, these autonomous workflows are still best treated as supervised automation rather than fully independent penetration testing.

In practice, the workflow looks like this:

- Deterministic tools perform discovery (for example port scanning, service detection, or authentication enumeration).

- The AI system analyzes the output and identifies potential areas of interest.

- The orchestrator selects the next action based on the model’s suggestion.

- Results are fed back into the system for the next iteration.

In traditional testing workflows, this loop is typically driven entirely by the tester:

scan → analyze → plan → execute → analyze

With AI-assisted tooling, parts of the analysis and enumeration process can be partially automated, resulting in a workflow that looks more like:

scan → AI analyze → auto-enumerate → AI correlate → tester decision

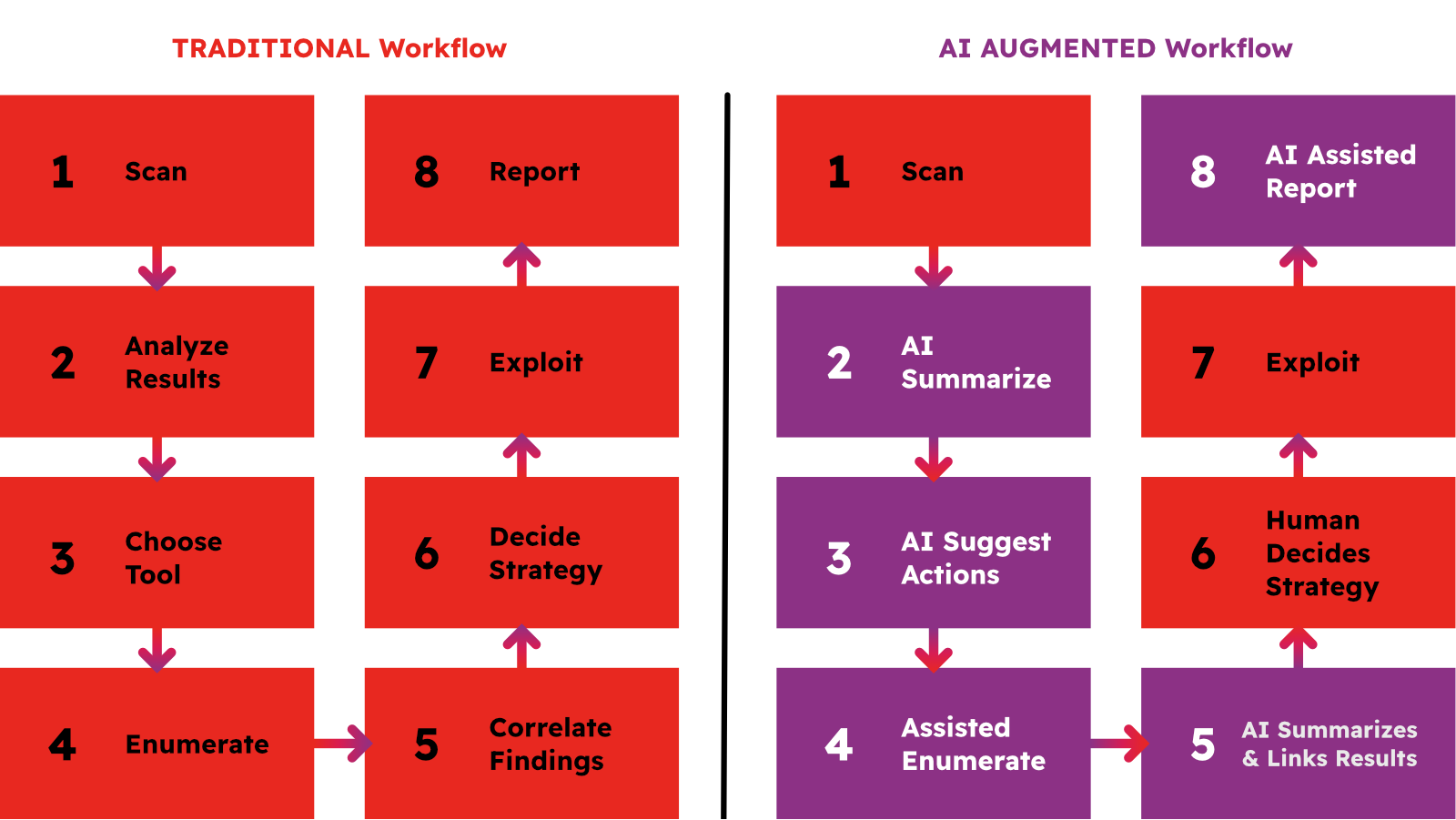

Traditional:

Scan → Analyze results → Choose tool → Enumerate → Correlate findings → Decide strategy → Exploit → Report

AI-augmented:

Scan → AI summarizes → AI suggests actions → Assisted enumeration → AI summarizes and links scan results → Human decides → Exploit → AI assists reporting

The LLM is not replacing existing tools. Instead, it is assisting with the decision-making process that normally happens during manual testing, such as identifying which services warrant deeper investigation or which enumeration techniques should be attempted next.
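The supervised loop described in this section can be sketched in a few lines of Python. This is a minimal illustration, not how PentestGPT, PentAGI, or RapidPen are implemented: `Engagement`, `run_loop`, and the `suggest`, `approve`, and `execute` callbacks are hypothetical names, and the model is replaced by a stub so the example runs without any external service.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Engagement:
    """Accumulated tool output and a log of what was run or rejected."""
    observations: List[str] = field(default_factory=list)
    history: List[str] = field(default_factory=list)


def run_loop(
    state: Engagement,
    suggest: Callable[[Engagement], Optional[str]],  # stands in for the LLM
    approve: Callable[[str], bool],                  # the human tester
    execute: Callable[[str], str],                   # wraps a deterministic tool
    max_steps: int = 5,
) -> Engagement:
    for _ in range(max_steps):
        action = suggest(state)
        if action is None:          # the model has nothing further to propose
            break
        if not approve(action):     # tester vetoes: stop and hand control back
            state.history.append(f"rejected: {action}")
            break
        state.history.append(f"ran: {action}")
        state.observations.append(execute(action))
    return state


def fake_suggest(state: Engagement) -> Optional[str]:
    """Stub model: proposes service detection first, then SMB enumeration."""
    if not state.history:
        return "service-detect 10.0.0.5"
    if len(state.history) == 1:
        return "enum-smb 10.0.0.5"
    return None


# The tester in this demo only approves service detection.
state = run_loop(
    Engagement(),
    suggest=fake_suggest,
    approve=lambda action: action.startswith("service-detect"),
    execute=lambda action: f"output of {action}",
)
```

The point of the design is that the model only proposes; nothing runs unless the `approve` callback returns true, which keeps the tester's decision in the loop.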

Where AI can help

AI-assisted workflows are particularly useful when dealing with large volumes of reconnaissance data.

Large-scale output analysis

Enumeration tools generate extensive output: port scans, service banners, directory listings, authentication responses, and configuration data. LLMs can summarize this output and highlight anomalies or potentially vulnerable services.
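Before scan output reaches a model, it usually helps to reduce it to the facts that matter. The sketch below parses a trimmed fragment shaped like Nmap's XML output (`nmap -oX`) with Python's standard library and produces one line per open port. The sample data and the `summarize_open_services` helper are illustrative assumptions; real Nmap files contain many more elements and attributes.

```python
import xml.etree.ElementTree as ET
from typing import List

# Hand-written sample in the shape of Nmap XML output (heavily trimmed).
SAMPLE_XML = """<nmaprun>
  <host>
    <address addr="10.0.0.5" addrtype="ipv4"/>
    <ports>
      <port protocol="tcp" portid="22">
        <state state="open"/>
        <service name="ssh" product="OpenSSH" version="7.4"/>
      </port>
      <port protocol="tcp" portid="445">
        <state state="open"/>
        <service name="microsoft-ds"/>
      </port>
      <port protocol="tcp" portid="3389">
        <state state="closed"/>
      </port>
    </ports>
  </host>
</nmaprun>"""


def summarize_open_services(xml_text: str) -> List[str]:
    """Return one compact line per open port: 'host proto/port service'."""
    root = ET.fromstring(xml_text)
    lines = []
    for host in root.iter("host"):
        addr_el = host.find("address")
        addr = addr_el.get("addr") if addr_el is not None else "unknown"
        for port in host.iter("port"):
            state = port.find("state")
            if state is None or state.get("state") != "open":
                continue  # skip closed or filtered ports
            svc = port.find("service")
            parts = []
            if svc is not None:
                parts = [svc.get(k) for k in ("name", "product", "version") if svc.get(k)]
            desc = " ".join(parts) or "unknown"
            lines.append(f"{addr} {port.get('protocol')}/{port.get('portid')} {desc}")
    return lines


summary = summarize_open_services(SAMPLE_XML)
```

A condensed summary like this is cheaper to send to a model and easier for a tester to sanity-check than raw scanner output.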

It is worth noting that deterministic scanners have provided similar capabilities for years. Platforms such as Nessus maintain large vulnerability signature databases that map service versions to known vulnerabilities and exploitation guidance. For well-understood vulnerabilities, these rule-based systems remain extremely effective.

Where LLMs can add value is in correlating information across multiple sources, for example linking scan results, service configurations, and authentication responses to suggest follow-up testing steps.
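One simple way to prepare for that kind of correlation is to group findings from different tools by host, so the model (or the tester) sees related signals side by side. The following is a deterministic sketch of the grouping step only; the `findings` records, their field names, and the `correlate` function are hypothetical and do not reflect any specific tool's output format.

```python
from collections import defaultdict

# Invented findings from three different (hypothetical) tool runs.
findings = [
    {"source": "portscan", "host": "10.0.0.5", "note": "tcp/445 open"},
    {"source": "smb-enum", "host": "10.0.0.5", "note": "SMB signing not required"},
    {"source": "auth-check", "host": "10.0.0.5", "note": "guest login accepted"},
    {"source": "portscan", "host": "10.0.0.9", "note": "tcp/22 open"},
]


def correlate(findings, min_sources=2):
    """Return hosts that were flagged by at least `min_sources` distinct tools."""
    by_host = defaultdict(list)
    for f in findings:
        by_host[f["host"]].append(f)

    leads = {}
    for host, items in by_host.items():
        sources = {f["source"] for f in items}
        if len(sources) >= min_sources:
            leads[host] = [f"{f['source']}: {f['note']}" for f in items]
    return leads


leads = correlate(findings)
```

Here only 10.0.0.5 is returned, because three independent sources point at it; deciding what those combined signals mean is still left to the model and the tester.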

Attack path analysis

In complex enterprise environments, exploitation often requires chaining together multiple misconfigurations. Tools such as BloodHound already perform graph analysis of Active Directory environments and provide prebuilt queries that help identify potential privilege escalation paths.

LLMs do not replace these graph-based techniques. Instead, they can automate the interpretation of those results. For example, an AI-assisted workflow might analyze BloodHound output, identify a possible privilege escalation chain, and suggest the commands required to test each step of the attack path.
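To make the idea of a privilege escalation chain concrete, here is a toy breadth-first search over a hand-written edge list. The relationship names (`MemberOf`, `AdminTo`, `HasSession`) are borrowed from BloodHound's edge types, but the data, the `EDGES` list, and the `shortest_path` function are assumptions for illustration; this is not BloodHound's data format, query language, or API.

```python
from collections import deque

# (source, relationship, destination); an invented miniature domain.
EDGES = [
    ("alice", "MemberOf", "helpdesk"),
    ("helpdesk", "AdminTo", "ws01"),
    ("ws01", "HasSession", "svc_backup"),
    ("svc_backup", "MemberOf", "domain admins"),
    ("alice", "MemberOf", "staff"),
]


def shortest_path(edges, start, goal):
    """Breadth-first search; returns the list of edges on a shortest path, or None."""
    graph = {}
    for src, rel, dst in edges:
        graph.setdefault(src, []).append((rel, dst))

    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for rel, dst in graph.get(node, []):
            if dst not in seen:
                seen.add(dst)
                queue.append((dst, path + [(node, rel, dst)]))
    return None


path = shortest_path(EDGES, "alice", "domain admins")
```

Each edge on the returned path corresponds to one step a tester would need to verify by hand, which is the part an LLM can help explain and turn into candidate commands.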

Command generation and workflow automation

AI systems can also help generate commands for commonly used tools and maintain context across multiple steps of an engagement. This can streamline repetitive tasks such as enumeration or documentation of testing activity.
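One cautious way to use model-generated commands is to let the model choose only an action name and parameters, then build the actual command line from a fixed template after validating the inputs. The sketch below assumes a single hypothetical template, `service_detect`, that maps to Nmap's `-sV` (version detection) and `-p` (port list) options; the `TEMPLATES` table and `build_command` function are illustrative names.

```python
import ipaddress
import shlex

# Allowlisted actions mapped to fixed argument templates.
TEMPLATES = {
    "service_detect": ["nmap", "-sV", "-p", "{ports}", "{target}"],
}


def build_command(action: str, target: str, ports: str) -> str:
    """Build a shell-safe command string from validated parameters."""
    if action not in TEMPLATES:
        raise ValueError(f"action not allowlisted: {action!r}")

    # Raises ValueError if the target is not a literal IP address.
    ipaddress.ip_address(target)

    port_list = ports.split(",")
    if not all(p.isdigit() and 0 < int(p) <= 65535 for p in port_list):
        raise ValueError(f"invalid port list: {ports!r}")

    argv = [part.format(target=target, ports=ports) for part in TEMPLATES[action]]
    return shlex.join(argv)


command = build_command("service_detect", "10.0.0.5", "22,445")
```

Because the target must parse as an IP address, a suggestion such as `10.0.0.5; rm -rf /` is rejected before any command string is built.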

Limits of automation

Despite these capabilities, fully autonomous network penetration testing remains impractical in most real-world environments.

Enterprise networks contain complex trust relationships, legacy systems, and specific authentication flows that require careful analysis. While LLMs can reason about structured data and relationships between systems, they often lack the environmental awareness needed to reliably execute long attack chains involving lateral movement, privilege escalation, and persistence.

The examples below illustrate why human oversight remains essential:

Stealth and detection

Automated systems often assume they can freely scan or enumerate a network. In practice, aggressive activity can trigger intrusion detection systems, endpoint monitoring, or account lockout policies. Human testers routinely adjust tactics (slowing scans, changing enumeration techniques, or blending activity with legitimate traffic), while automated workflows typically lack this operational awareness.
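Account lockout is one place where a simple guard can encode some of that operational awareness. The sketch below caps authentication attempts per account a safe distance below an assumed lockout threshold. The `LockoutBudget` class and its parameters are hypothetical, and the example deliberately ignores the observation window after which real lockout counters reset.

```python
from collections import Counter


class LockoutBudget:
    """Tracks authentication attempts so no account reaches the lockout threshold."""

    def __init__(self, lockout_threshold: int, safety_margin: int = 2):
        # Stop well before the threshold; never allow a negative budget.
        self.max_attempts = max(lockout_threshold - safety_margin, 0)
        self.attempts = Counter()

    def allow(self, account: str) -> bool:
        """Return True and record the attempt if the account still has budget."""
        if self.attempts[account] >= self.max_attempts:
            return False
        self.attempts[account] += 1
        return True


# Assumed policy: accounts lock after 5 failed logins, so allow at most 3.
budget = LockoutBudget(lockout_threshold=5)
results = [budget.allow("alice") for _ in range(4)]
```

An automated workflow would call `allow` before every login attempt and skip the account once it returns false, leaving the decision to continue with the tester.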

Pivoting and network segmentation

Enterprise networks are rarely flat. Segmentation through firewalls, VLANs, and jump hosts requires testers to pivot through compromised systems or establish tunnels to reach additional network segments. Coordinating these multi-hop attack paths remains difficult for current AI-driven systems.

Authentication complexity

Many attacks depend on subtle behaviors in protocols such as Kerberos or NTLM. Techniques such as delegation abuse or relay attacks often rely on implementation details that vary between environments. While LLMs can reason about these mechanisms, reliably exploiting them across diverse systems remains challenging.

Risk of service disruption

Autonomous testing workflows can introduce operational risk if not carefully controlled. Penetration testing activities, especially exploitation attempts, authentication brute forcing, or aggressive scanning, can cause service instability, trigger account lockouts, or disrupt production systems.

In traditional engagements, testers deliberately pace their activity and assess the potential impact of each action before proceeding. Automated systems, by contrast, may execute sequences of commands without understanding their operational consequences.

More advanced agent-based systems that can execute actions beyond scanning, such as modifying configurations, uploading payloads, or interacting with exposed services, introduce additional risks. If insufficiently constrained, such systems can perform destructive actions, including overwriting files, modifying system configurations, or deleting data.

While the risk is not unique to AI, the increased speed and autonomy of these systems can amplify the potential impact if safeguards are not in place.
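A safeguard of this kind can be as simple as a policy check that runs before every agent action. The sketch below denies anything outside the agreed scope, allows read-only actions, and holds state-changing actions until a tester approves them. The `Action` type, the action kinds, and the `check` function are hypothetical names chosen for illustration.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "scan", "enumerate", "upload", "modify_config", "delete"
    target: str  # literal IP address of the system the action touches


# Action kinds assumed not to change state on the target.
READ_ONLY = {"scan", "enumerate"}


def check(action: Action, scope, approved: bool = False) -> str:
    """Return 'allow', 'hold: ...', or 'deny: ...' for a proposed action."""
    addr = ipaddress.ip_address(action.target)
    if not any(addr in network for network in scope):
        return "deny: out of scope"
    if action.kind in READ_ONLY:
        return "allow"
    # Anything that can change state needs explicit sign-off.
    return "allow" if approved else "hold: needs tester approval"


scope = [ipaddress.ip_network("10.0.0.0/24")]
decisions = [
    check(Action("scan", "10.0.0.5"), scope),
    check(Action("delete", "10.0.0.5"), scope),
    check(Action("delete", "10.0.0.5"), scope, approved=True),
    check(Action("scan", "192.168.1.1"), scope),
]
```

Treating unknown action kinds as state-changing by default means a new capability added to the agent is held for approval until someone deliberately classifies it as read-only.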

Security teams should also be cautious when forwarding penetration testing artifacts to external AI services, such as Claude Code or ChatGPT. Internal assessments frequently uncover sensitive information such as credential material, Active Directory structures, or data stored on misconfigured systems. Automatically transmitting this information to external LLM providers for analysis may expose regulated data outside the organization’s security boundary and could conflict with internal data handling policies or regulatory frameworks such as GDPR, PCI DSS, or HIPAA.
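One partial mitigation is to redact obvious secrets before any artifact leaves the organization. The sketch below uses two illustrative regular expressions, one for LM:NT hash pairs and one for `password=`-style assignments. The `PATTERNS` list and `redact` function are assumptions, the sample line uses the well-known hash values for an empty password, and pattern-based redaction only catches formats it already knows about, so it does not replace a data handling review.

```python
import re

PATTERNS = [
    # LM:NT hash pair as printed by many credential-dumping tools (32 hex chars each).
    (re.compile(r"\b[0-9a-fA-F]{32}:[0-9a-fA-F]{32}\b"), "[REDACTED_HASH]"),
    # password=..., passwd: ..., pwd = ... (case-insensitive).
    (re.compile(r"\b(password|passwd|pwd)\s*[=:]\s*\S+", re.IGNORECASE), r"\1=[REDACTED]"),
]


def redact(text: str) -> str:
    """Apply every redaction pattern in order and return the scrubbed text."""
    for pattern, replacement in PATTERNS:
        text = pattern.sub(replacement, text)
    return text


hash_line = redact(
    "svc_sql:1105:aad3b435b51404eeaad3b435b51404ee:31d6cfe0d16ae931b73c59d7e0c089c0:::"
)
config_line = redact("login ok password: Winter2024!")
```

Running redaction locally, before the text is handed to any client library for an external model, keeps the unredacted artifact inside the organization's boundary.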

Conclusion

AI-assisted network testing represents a useful evolution of existing workflows. It can help to analyze and process large volumes of data more efficiently, identify relationships within complex environments, and automate repetitive parts of the testing process.

However, the core mechanics of penetration testing remain unchanged: deterministic tools perform the scanning, skilled practitioners interpret the results and guide the testing strategy, and human expertise ultimately determines whether a vulnerability can be safely and reliably exploited.

The most effective security teams will treat AI as an accelerator, not as a substitute for technical skill and established testing practices.

About the Author

Robert is a Senior Security Engineer at Cloud Security Partners. He has worked in the IT security field since 2009. His primary focus has been in offensive security. Robert specializes in application and network penetration tests, cloud security audits, and red team engagements. A curious mindset along with multiple years of hands-on pen testing experience has allowed Robert to effectively identify and exploit security vulnerabilities.

In his spare time, Robert enjoys working out, mountain trekking, and playing indie/RPG video games.
