This image is licensed by FreePik

Introduction

The round table caught a High-severity SSRF finding for a function that never makes an HTTP request. A human reviewer found a serializer the agents missed entirely because it lived in an unconventional directory. Both of these tell you something about where the boundary falls between what AI agents can do and what still needs a human.

Security reviews require both mechanical work (running scanners, triaging hundreds of findings) and expert judgment (recognizing business-logic vulnerabilities, assessing exploitability). We built a skill that orchestrates a team of specialized AI agents to handle both: each with a distinct role, working in parallel, debating their findings before delivering a final report.

Will this replace a human security reviewer? No, not entirely, but the process of the review itself is changing in real time. This article describes (1) the design process, (2) the execution against a real Rails API for an in-development internal tool, and (3) where the boundary between agent capability and human judgment actually fell.

We built the skill using Claude Code, Agent Teams, and Superpowers. Superpowers is a Claude Code plugin that provides structured workflows (called "skills") for designing and testing reusable Claude Code skills. The team orchestrates deterministic scanning tools (semgrep, trufflehog, trivy), triages the results, performs manual expert analysis, writes a draft report, and holds a round table where the agents challenge each other's findings until they reach consensus.

Designing the Team

The design started with a single prompt describing the end-to-end workflow:

Let's design an agent team to conduct a security review of this repository. The team should start by understanding the project and its layout, running deterministic tools and producing output for other team members to validate and report on. Currently I have semgrep, trufflehog, and trivy installed and available for use. The agent responsible for running these tools can assess whether or not other tools would be useful but not recommend alternatives to these tools that do the same as each tool. The deterministic output should be saved to a new directory in this project. After, one team member should review the results of static analysis for true and false positives, one member should review the vulnerable dependencies to determine if the vulnerable code is actually in use so that only exploitable vulnerabilities are reported. There should be a language and security expert agent responsible for identifying security issues not commonly found by deterministic tools. There should also be an agent responsible for writing a report based on the true positive findings of the other agents. At the end, there should be a round table discussion between the agents to ensure completeness and accuracy of the report.

The prompt sketches an architecture (recon, parallel triage, expert analysis, report writing, round table) but leaves most of the design decisions open. This was intentional — the goal was to give Claude enough structure to ask the right questions rather than enough detail to skip them.

Starting with Requirements, Not Architecture

Rather than jumping straight into agent design, the first session was a structured requirements conversation. Claude loaded the Superpowers brainstorming skill and asked a series of targeted questions, each with labeled options, to nail down scope and constraints before proposing anything. Here's a representative exchange:

Claude: How should the agents handle findings volume? A Rails app this size could produce hundreds of semgrep/trivy findings, many of which are noise.

A) Report only true positives [...] B) Tiered reporting — confirmed issues get full write-ups, uncertain findings get a brief "needs further investigation" appendix C) Threshold-based — only report findings at or above a certain severity [...]

User: Confirmed issues should get a full write-up, if there are more than one instance of it then each location is noted in the finding but not reported as a new finding, uncertain findings should be noted as needs further investigation in an appendix.

When six agents are running in parallel, a vague requirement like "include findings" means six different interpretations.

The Expert Split

One question surfaced an early design improvement. Claude asked whether the manual security expert agent should do a broad review or focus on specific high-risk areas, offering three options. The response reframed the question entirely:

User: Is this a job for multiple agents? One that focuses on targeted high-risk areas and another that does a more broad review?

A single expert agent trying to deep-dive into JWT implementation and sweep every controller for mass assignment will do both poorly. Two agents with distinct mandates produce better coverage with less context pollution: one tracing data flow through code paths, another sweeping the full codebase for common vulnerability classes.

This split the expert role into a Targeted Security Expert (focused on language-specific areas like constantize usage and Pundit policy gaps) and a Broad Security Expert (looking for IDOR, SQL injection, information disclosure, race conditions, and similar patterns across the whole codebase).

Choosing a Coordination Architecture

With requirements locked down, Claude proposed three coordination architectures. A fully sequential pipeline was simple but unnecessarily slow, each agent waits for the previous one even when there are no dependencies. A two-team structure with separate tool-scanning and manual-review teams offered clean separation but made deduplication harder across reports.

We chose the third option: a phased model with parallel triage and parallel experts. The recon phase produces shared context, then four analysis agents run in parallel, followed by report writing and the round table. This maximizes parallelism during the most time-consuming work while respecting real sequential dependencies (you can't triage semgrep results before semgrep runs).

A quick validation question confirmed a key design element: the recon phase would produce a project overview document — an architecture briefing covering trust boundaries, auth flows, and high-risk areas. Each Phase 2 agent starts with this shared context instead of independently exploring the codebase and wasting tokens rediscovering the same things.

Splitting the Recon Agent

The initial design had a single "Recon Agent" handling both project analysis and tool execution:

User: I think that is too much work for one agent, let's split the project analyst and tool runner into two separate agents that work in sequence. Project analyst → tool runner.

Those are fundamentally different tasks. One is reading and synthesizing code; the other is installing and running CLI tools. Combining them means a single failure (say, a semgrep installation issue) blocks the project overview that all downstream agents need.

The split into a Project Analyst (explore the codebase, produce the architecture briefing) and a Tool Runner (execute semgrep, trufflehog, trivy, save raw JSON output) was a straightforward improvement. They run sequentially because the Tool Runner reads the project overview to configure its scans appropriately, but the separation keeps each agent's scope clean.

The Round Table: Don't Simulate Independence

The round table design went through three iterations. The evolution illustrates a common failure mode in agent design.

First proposal: A single agent simulates multiple perspectives. The moderator "reviews the draft through the lens of each specialty." Fast and cheap, but theater. One agent critiquing its own work product through different personas doesn't produce genuine challenge — the AI equivalent of a code review where the author approves their own PR.

User: The round table should not be a simulation, each agent should participate independently.

Second proposal: Spawn new agents for the round table. Fresh instances with the same role descriptions as the Phase 2 agents. But this throws away the most valuable thing the original agents have: their internal reasoning. When the SAST Triage agent marked a finding as a false positive, it traced the code path, checked for sanitization, and made a decision. That reasoning lives in the agent's context, not in its output file. A new agent reading the triage summary doesn't have access to the edge cases that were considered and dismissed.

User: Why do you propose completely new agents rather than the ones that conducted the review in the first place?

Third proposal, the right one: Resume the original Phase 2 agents. They retain their full analysis context and can defend, revise, or challenge findings with the depth of someone who actually did the work.

Claude acknowledged it had defaulted to the simpler implementation, then overcorrected by spawning new agents without thinking through what would be lost. The one legitimate concern was context window pressure (resuming agents with large analysis histories risks hitting limits), addressed as a documented fallback rather than a reason to abandon the approach.

This exchange was also where we noticed that Claude was designing around subagent orchestration, not the agent team model we'd requested. The language of "spawning" and "resuming" agents is fundamentally different from persistent team members picking up tasks. We chose not to correct it here. Getting the roles, phases, and round table protocol right was more important than the coordination, and fixing the orchestration at the end turned out to be a clean swap.

The Final Roster

The design settled on 8 agents across 4 phases:

Phase 1a: [Project Analyst]

|

Phase 1b: [Tool Runner]

|

┌────┼──────────────┬──────────────┐

Phase 2: [SAST ] [Dep ] [Targeted ] [Broad ]

[Triage ] [Triage ] [Expert ] [Expert ]

└────┬──────────────┴──────────────┘

|

Phase 3: [Report Writer]

|

Phase 4: [Round Table Moderator]

┌────┼──────────────┬──────────────┐

[SAST ] [Dep ] [Targeted ] [Broad ]

[Triage ] [Triage ] [Expert ] [Expert ]

└────┬──────────────┴──────────────┘

|

[Moderator finalizes report]

The round table reuses the Phase 2 agents rather than creating new ones, so there are 8 distinct agents but 12 tasks in total once the round table feedback tasks are counted.

From Design to Skill

We were never explicit about the output format, so Claude initially started drafting a step-by-step implementation plan. We redirected: we didn't want a one-off script; we wanted a reusable skill. The skill was implemented as two files: an orchestration guide (how to coordinate the phases, manage file I/O handoffs, and handle the round table) and a complete set of agent prompts (exactly what each agent should do, read, and produce). Incremental phase-by-phase testing caught gaps that a full-pipeline run would have buried and we adjusted the skill accordingly.

The Final Architectural Pivot: Subagents to Agent Teams

The original prompt explicitly asked for an agent team; Claude Code's TeamCreate primitive, where agents persist as named team members with explicit task dependencies. However, Claude defaulted to subagent orchestration, where a central orchestrator spawns each agent, waits for its summarized result, and dispatches the next phase. The design document, the agent prompts, the incremental testing: all of it was built on the subagent model without questioning whether it matched the original ask.

With the logical design validated and the skill tested, it was time to address the orchestration model we'd been deferring since the round table discussion:

User: The orchestration uses subagents rather than an agent team. Our hypothesis is that an agent team, at least for the parallel execution, report writing, and round table, will be more effective than subagents.

As expected, Claude agreed, but it explained its reasoning. In the subagent model, the orchestrator is a bottleneck: its context grows with every agent return, and by Phase 3 it's already holding results from six agents. Worse, the round table requires resuming Phase 2 agents through the orchestrator, adding serialization overhead. The team model solved this cleanly. Phase 2 agents persist as team members, tasks have explicit dependencies, and the round table works by assigning new tasks to agents that never left. No re-spawning, no context serialization, no orchestrator bloat.

The skill files were rewritten to use the team model, arriving after a full design cycle at the architecture the original prompt had asked for in the first place.

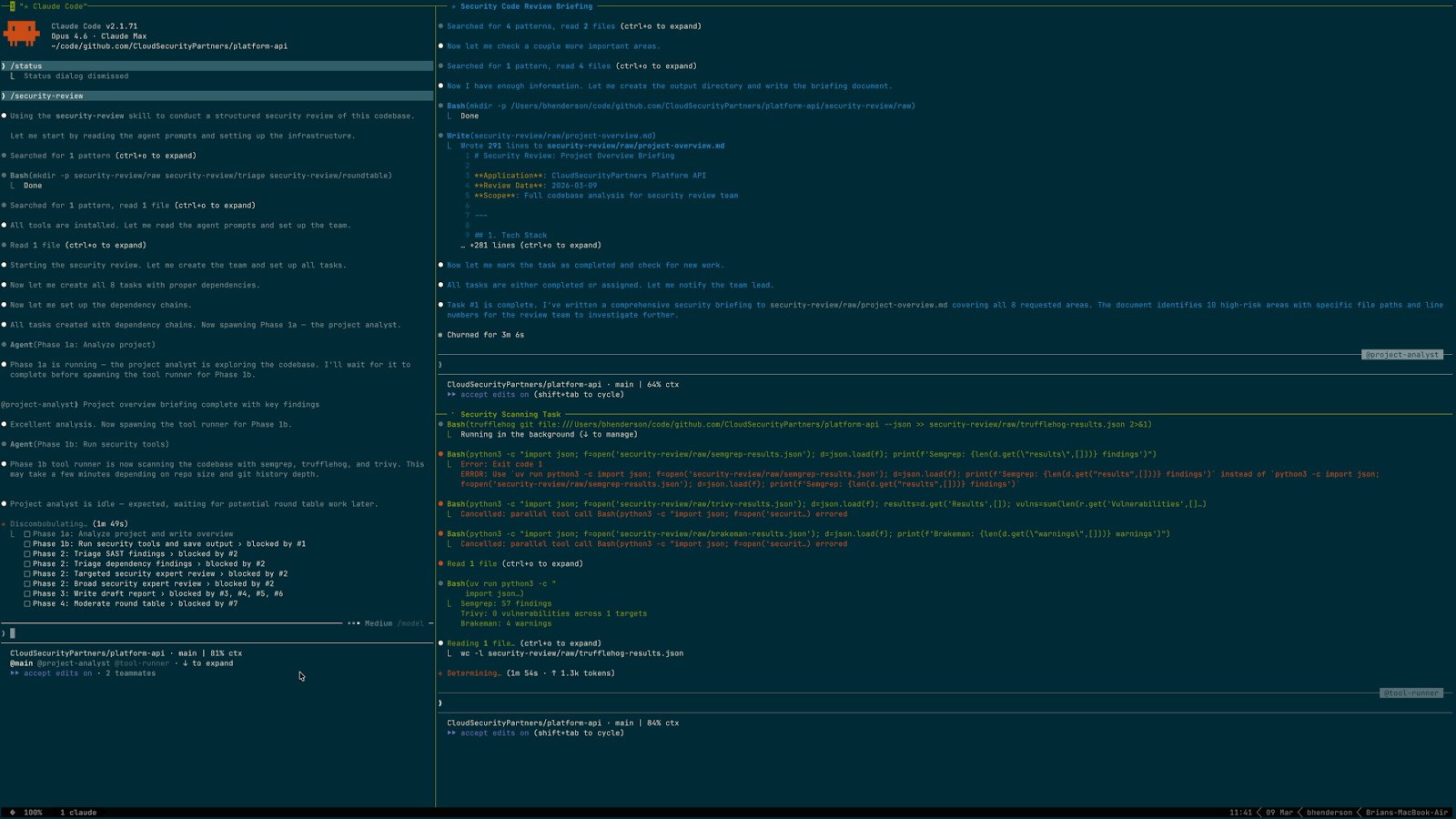

Running the Review

With the skill complete, running it was a single command: /security-review. The entire review, from invocation to final report, took roughly 31 minutes of autonomous execution against a production Rails API with ~40 models, Devise+JWT authentication, Pundit authorization, Sidekiq background jobs, and AWS integrations.

Phase 1: Reconnaissance (~5.5 minutes)

The Project Analyst spent about 3 minutes exploring the codebase and produced a 15KB architecture briefing covering the tech stack, domain models, authentication and authorization flows, trust boundaries, and high-risk areas for manual review. One early finding was telling: the developers had left comments like # insecure: fix idor and # Todo: authz scattered through the code. The analyst flagged these as anchors for the downstream agents.

The Tool Runner followed with a 2.5-minute scan, running semgrep (57 findings), trufflehog (10 raw results), and trivy (0 CVEs). It also recognized that Brakeman was already installed and added it to the scan, producing 4 additional Rails-specific warnings. The decision to include Brakeman was documented in a separate rationale file rather than silently acted on.

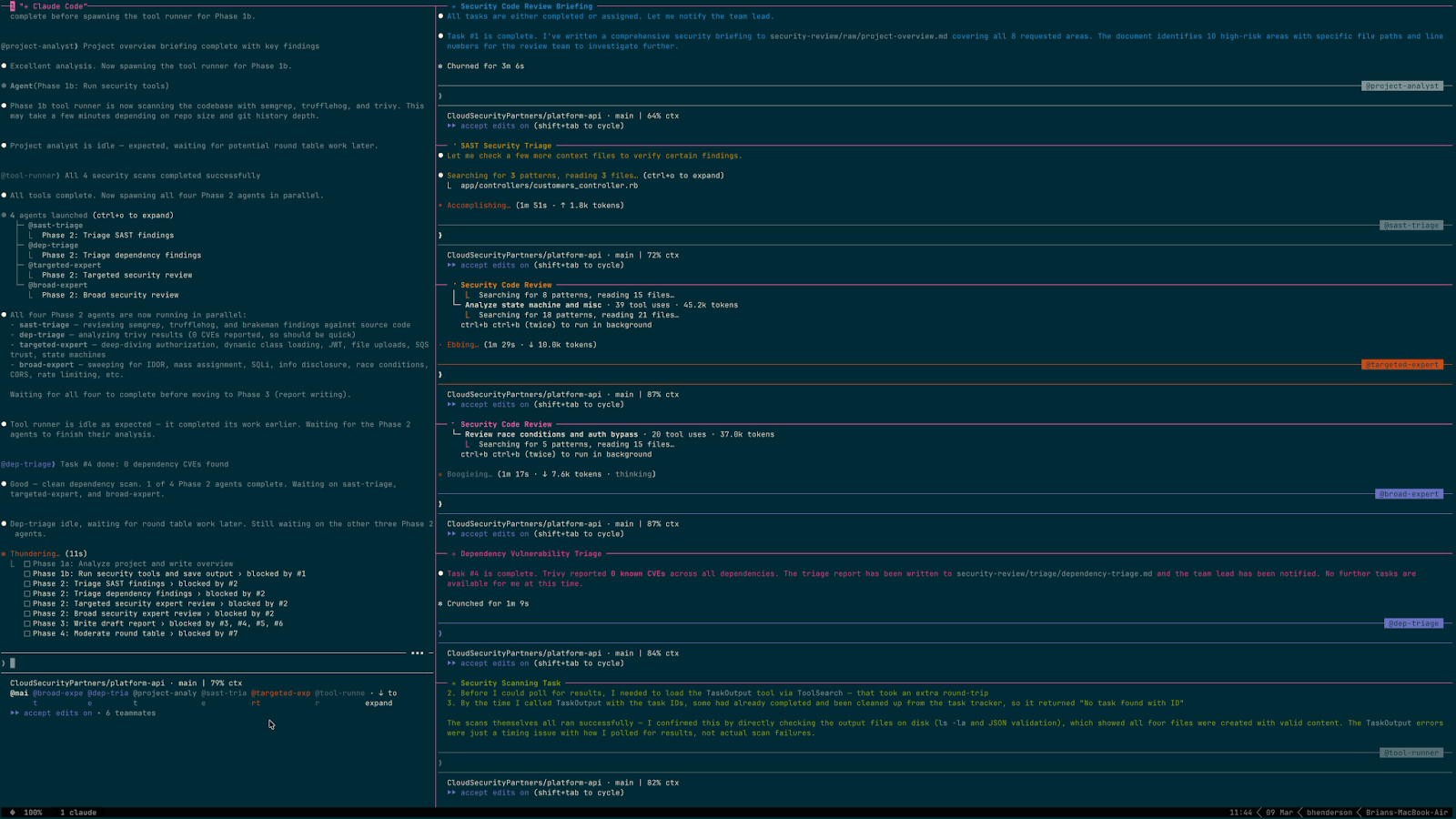

Phase 2: Parallel Analysis (~6.5 minutes)

Four agents launched simultaneously.

SAST Triage took 4.5 minutes to work through the combined output from semgrep (57 findings), Brakeman (4 warnings), and trufflehog (4 results after deduplication). Of those, 5 were confirmed true positives, 3 were marked uncertain, and the rest were dismissed as false positives. The most labor-intensive work was evaluating 46 semgrep check-unscoped-find results. Most were correctly authorized by Pundit policies; 5 were genuine IDOR vulnerabilities. The agent also determined that trufflehog's "secrets" were URL slugs misidentified as Cloudflare API tokens and test TLS certificates.

Targeted Security Expert spent 5.7 minutes deep-diving into the six high-risk areas identified in the project briefing. It produced 13 confirmed findings and 4 uncertain items. The most significant discovery was a Critical state machine bypass: the :state attribute was included in assessment_params, meaning any authenticated user could PATCH an assessment directly to delivered status, skipping peer review and QA editing entirely. No SAST tool would catch this — it requires understanding the application's business logic to recognize that a permitted parameter can subvert a workflow.

Broad Security Expert took 5.8 minutes sweeping the full codebase. It produced 14 confirmed findings and 4 uncertain items, including the other Critical: the Employee model serialized all database columns in API responses, exposing decrypted OTP secrets, encrypted passwords, backup codes, and password reset tokens. Any authenticated user with access to the employees endpoint could extract another user's OTP secret and bypass their 2FA.

Dependency Triage finished in about a minute. Trivy reported zero CVEs, so the agent catalogued the security-relevant gems and their versions and moved on.

Phase 3: Report Compilation (~4 minutes)

The Report Writer read all four triage reports, deduplicated findings that multiple agents had independently discovered (several IDOR issues were found by 2–3 agents working from different angles), and compiled a 35KB draft report with 22 findings numbered FINDING-001 through FINDING-022.

It also flagged three severity disagreements for the round table:

- Rate limiting: Medium (Targeted Expert) vs. High (Broad Expert)

- JSON encoding race condition: Low (SAST Triage) vs. Medium (others)

- Backup code race condition: Uncertain (Targeted Expert) vs. Medium (Broad Expert)

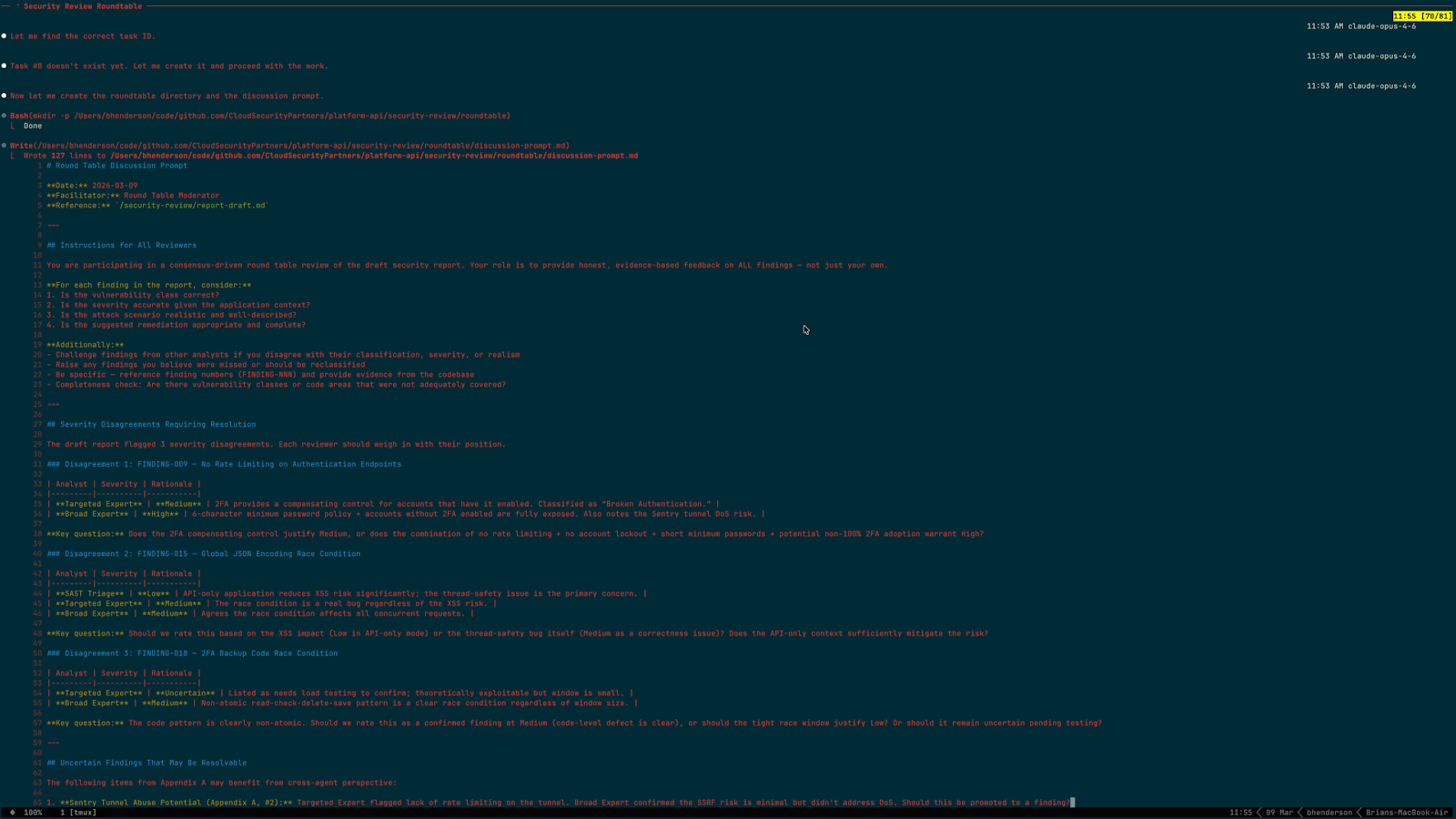

Phase 4: Round Table (~13 minutes)

This was the longest phase and the one that mattered most for report quality.

The Round Table Moderator wrote a 6KB discussion prompt framing the three severity disagreements, listing six uncertain items that might be resolvable with cross-agent perspective, and providing a completeness checklist. It then created feedback tasks for the four Phase 2 agents.

The most consequential challenge came from the SAST Triage agent, which argued that FINDING-008 (SSRF, rated High by the Targeted Expert) was a false positive. From its round table feedback:

This is a false positive in the current codebase. The download_sarif method in app/sidekiq/sarif_ingestion_job.rb:32-39 completely ignores the sarif_url parameter:

def download_sarif(sarif_url)

Rails.logger.info "Downloading SARIF from: #{sarif_url}"

begin

return File.read("test/fixtures/files/semgrep_example.sarif")

rescue StandardError => e

Rails.logger.error("Error downloading SARIF: #{e}")

end

end

The method accepts sarif_url as a parameter but reads a hardcoded local fixture file. The URL is only logged, never fetched. There is no SSRF in the current code. [...] Classifying it as High SSRF is inaccurate and would undermine report credibility with the client.

The Targeted Expert had originally built an elaborate AWS metadata attack scenario around this method without reading the 7-line body carefully enough to notice it was a development stub. In its round table feedback, it reversed position: "The current code is NOT vulnerable to SSRF. I verified app/sidekiq/sarif_ingestion_job.rb:32-39 [...] The sarif_url parameter is logged but never used for HTTP requests."

The rate limiting debate was the most substantive disagreement. The Targeted Expert had originally rated it Medium, citing 2FA as a compensating control. The SAST Triage agent challenged this directly: the require_two_factor! before_action blocks API usage if 2FA is not enabled, but it does not block the login endpoint itself. An attacker can brute-force credentials against POST /employees/sign_in regardless of 2FA status. The Targeted Expert then reversed position, adding a chaining argument that no other agent had raised: if any account is compromised via brute force, the attacker can read plaintext OTP secrets for all users from the default Employee serialization, cascading into 2FA bypass for every account.

The moderator synthesized all feedback and resolved the disagreements:

- SSRF (FINDING-008) moved from High → Low because two agents independently verified it's a dev stub

- Rate Limiting (RINDING-009) moved from Medium → High because Unanimous: three compounding missing controls

- FileUploads (FINDING-006) moved from High → Medium because endpoint returns 500, accidentally preventing exploitation

- Mass Assignment :role (FINDING-016) moved from Medium → Low because policy verification proved attack is impossible

- Backup Code Race (FINDING-018) moved from Uncertain → Low because confirmed code defect, but tight race window

- JSON Encoding Race (FINDING-015) stayed at Medium because 3-1 majority; Targeted Expert dissented

The final report was written to security-review/report-final.md at 44KB.

The Round Table Changed the Report

The round table wasn't ceremonial. The SSRF finding and the FINDING-016 privilege escalation (where the Broad Expert corrected its own finding after reading the SAST Triage agent's challenge, verifying that the Pundit policy made the described attack scenario impossible) would both have shipped in the final report without it. The consensus process caught errors that no individual agent would have caught in its own work.

Rough Edges

The execution wasn't flawless. The round table required manual orchestrator intervention to wake up idle agents that didn't immediately pick up their new feedback tasks. The team model's task notification isn't yet reliable enough for fully autonomous multi-phase coordination.

The findings themselves deserve scrutiny. The false positive rate from the SAST tools was high (the vast majority of semgrep's 57 findings were dismissed), though the triage agents handled it well by providing specific rationale for each dismissal. Some of the Medium and Low findings read more like hardening recommendations than exploitable vulnerabilities.

The expert agents' manual findings are harder to validate without a human reviewer confirming each one. To test this, we independently validated FINDING-002, the Critical about Employee model serialization. The report claimed the Employee model had no serializer and that all endpoints exposing Employee data leaked sensitive fields.

The reality was more nuanced. An EmployeeSerializer does exist, but it's in app/policies/ instead of the conventional app/serializers/ directory. We confirmed that AMS autoloads from all Rails autoload paths, so the EmployeesController endpoints are protected by the serializer the agents never found.

The finding is still legitimate. AccountController#show bypasses AMS by calling as_json directly with an incomplete exclusion list, and CustomersController#show includes employees without a CustomerSerializer, causing AMS to fall back to Rails default serialization. But the attack surface is narrower than the report claims: two of the three reported endpoint families are vulnerable, not all three. This is exactly the kind of correction that human review adds on top of the agent team's work.

What We'd Change

The execution exposed several improvements worth making before the next run.

Add a Verification Phase and Split the Round Table

The two biggest errors in the draft report shared a root cause: an agent classified a finding based on incomplete reading of the code. Both were caught by the round table, but they shouldn't have made it that far.

A short verification pass after Phase 2 would fix this: before the report writer starts, each expert re-reads one key code location per High or Critical finding and confirms the vulnerability is real. This adds minimal time and prevents the round table from spending its budget on factual corrections rather than genuine severity and completeness debates.

The expert prompts should also require that agents read the full implementation of every method in a finding's call chain before classifying it Medium or above. Read the body. Cite the relevant lines.

With factual errors caught earlier, the round table itself could split into two focused rounds: a short fact-checking pass (directed, evidence-based verification tasks), followed by severity and completeness discussion. The current single-round format conflates both, and agents spent time debating the severity of findings that turned out to be factually wrong.

Separate Exploitable Findings From Hardening Recommendations

The report lists 22 findings from Critical to Low, but several Low findings (Dockerfile running as root, CORS accepting a staging origin, the well-mitigated constantize pattern) are hardening recommendations, not exploitable vulnerabilities. Mixing them with findings that have concrete attack scenarios dilutes the report's urgency. The report structure should distinguish between "an attacker can do X today" and "if circumstances change, X could become possible."

Fix the Idle Agent Problem

The round table required manual orchestrator intervention to wake up Phase 2 agents for their feedback tasks. The skill should instruct the orchestrator to explicitly message each agent after round table tasks are created rather than relying on task notifications alone. The round table cannot function if agents don't participate, and manual intervention defeats the purpose of automation.

Conclusion

The agents handled the mechanical work well: running tools, triaging false positives, tracing code paths, and writing detailed findings. The expert agents found the two most important vulnerabilities — both business-logic issues that standard SAST tools are unlikely to catch. The round table caught errors that no individual agent would have caught alone, including the High-severity SSRF for a function that never makes HTTP requests. Splitting the expert role into targeted and broad reviewers, a design decision driven by the human during the brainstorming phase, directly produced both Critical findings.

The agents did not handle everything well. The Targeted Expert built a finding from a method signature without reading the body. The Broad Expert described an attack scenario that the authorization policy makes impossible. The report mixed exploitable vulnerabilities with hardening recommendations. The FINDING-002 spot-check showed the attack surface was narrower than claimed because the agents missed a serializer in an unconventional directory. The orchestration still needed manual nudges to keep agents participating in the round table.

The design process required constant expert human correction. Claude defaulted to simulating the round table, proposed new agents instead of resuming originals, and built the skill on subagent orchestration when the prompt asked for agent teams. Each default was reasonable and wrong. The structured design workflow from Superpowers kept the process disciplined (requirements before architecture, incremental testing before full runs), but the human still had to notice the drift and push back.

Is this a replacement for a human security reviewer? No. Is it a useful augmentation? Yes. The 31 minutes it takes to run is time a human reviewer can redirect from running tools and triaging false positives to validating the findings that actually need judgment. The process has clear room for improvement, but even in its current form, it surfaces real issues and argues about them before a human ever looks at the report.

About the Author

Brian Henderson is a Principal Engineer at Cloud Security Partners. He has dedicated his 20-year career to cybersecurity, gaining expertise across offensive and defensive security. He began with a focus on application and penetration testing before transitioning to securing cloud infrastructures for both startups and enterprises. In addition to his security expertise, Brian has recently worked as a software engineer, developing enterprise cloud security solutions, leading engineering teams, managing people, shaping product direction, and maintaining strong customer relationships. His broad experience allows him to bridge the gap between security, engineering, and business needs.

Outside of work, Brian enjoys spending time with his family, hiking, and playing board games.

Stay in the loop.

Subscribe for the latest in AI, Security, Cloud, and more—straight to your inbox.