Illustrated by Jeff Prymowicz

What We Mean by Skills

If you've spent any time in security, you know that many components of the job involve stitching together tools. You pull a log here, cross-reference a CVE there, run an indicator through threat intel, and eventually build a picture of what's happening. It can be tedious and repetitive.

That's where the idea of skills comes in. A skill, in the AI context, is a focused capability: one discrete thing that an AI system can do. Look up threat intel. Parse a log file. Enrich a CVE. Classify a URL as phishing or clean. Run a YARA rule against a sample. Each skill handles one job and does it well.

If you're a developer, think of skills the way you'd think of functions or microservices. They take an input, do something specific, and return an output. They're not full workflows; they're building blocks. What makes them interesting is that AI systems can call them dynamically, mixing and matching based on what a situation actually requires, rather than following a hardcoded sequence.
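To make the function analogy concrete, here is a minimal sketch of what a skill could look like in Python. The `classify_url` function, the `UrlVerdict` type, and the keyword list are all hypothetical and exist only for illustration; a real phishing classifier would rely on a trained model or a reputation service rather than three hand-written checks.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical keyword list, for illustration only.
SUSPICIOUS_KEYWORDS = ("login", "verify", "account-update")


@dataclass(frozen=True)
class UrlVerdict:
    url: str
    label: str      # "phishing" or "clean"
    reasons: tuple  # why the label was assigned


def classify_url(url: str) -> UrlVerdict:
    """A skill: one input, one bounded job, one structured output."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    reasons = []
    if parsed.scheme != "https":
        reasons.append("not served over HTTPS")
    # A host made only of digits and dots is treated as a raw IP address.
    if host.replace(".", "").isdigit():
        reasons.append("raw IP address used as host")
    if any(word in url.lower() for word in SUSPICIOUS_KEYWORDS):
        reasons.append("suspicious keyword in URL")
    # Toy threshold (an assumption): two or more signals means "phishing".
    label = "phishing" if len(reasons) >= 2 else "clean"
    return UrlVerdict(url=url, label=label, reasons=tuple(reasons))
```

The point is the shape rather than the detection logic: a typed input, a narrow job, and a structured result that a caller (human or AI) can act on without knowing how the skill works inside.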

This is a meaningful departure from how we've traditionally automated security work. Scripts run fixed instructions. Playbooks follow predefined paths. Skills are more composable. You can chain them in ways you didn't anticipate when you first built them, and that flexibility turns out to matter a lot in real investigations, where things rarely unfold the way you expected.

A skills ecosystem is already taking shape. Some skills come from vendors and platforms you probably already use. Others come from open-source projects. And many of the most useful ones will be ones your team builds internally: the integrations with your specific stack, your internal data, your particular environment.

Where a skill comes from matters for security reasons too. Skills often touch sensitive systems like your SIEM, your EDR, and internal APIs. A poorly designed or outright malicious third-party skill could quietly exfiltrate data, take unintended actions, or introduce hidden dependencies into production workflows.

Because skills are often invoked dynamically by AI systems, their behavior can be harder to audit than a traditional integration.

So treat a third-party skill like any other piece of operational code. Review where it came from and who maintains it. Look at what data it touches and whether it makes external network calls. Check what permissions it needs and whether those permissions are actually justified. Test it in a non-production environment before you let it anywhere near live systems. Log invocations and outputs so you can actually audit what's happening. This stuff isn't glamorous, but it's the difference between skills being a useful capability and a new attack surface.
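Two of those checks, permission review and invocation logging, can be enforced in code rather than left to process. The sketch below is one possible approach, not a standard API: the `audited` decorator, the `GRANTED_PERMISSIONS` allowlist, and the permission strings are all assumptions made up for this example.

```python
import functools
import logging
from typing import Callable

logger = logging.getLogger("skill_audit")

# Hypothetical allowlist: the permissions this deployment grants each skill.
GRANTED_PERMISSIONS = {
    "lookup_cve": {"network:outbound"},
    "parse_log": set(),
}


def audited(name: str, required: set):
    """Wrap a skill so it checks permissions and logs every invocation."""
    def decorator(func: Callable):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            granted = GRANTED_PERMISSIONS.get(name, set())
            missing = required - granted
            if missing:
                logger.warning("DENIED skill=%s missing=%s", name, sorted(missing))
                raise PermissionError(f"{name} lacks permissions: {sorted(missing)}")
            logger.info("CALL skill=%s args=%r kwargs=%r", name, args, kwargs)
            result = func(*args, **kwargs)
            logger.info("RESULT skill=%s output=%r", name, result)
            return result
        return wrapper
    return decorator


@audited("parse_log", required=set())
def parse_log(line: str) -> dict:
    """Split a 'key=value key=value' log line into a dict."""
    return dict(pair.split("=", 1) for pair in line.split())


@audited("parse_log", required={"siem:write"})
def overreaching_skill(line: str) -> dict:
    """A skill that asks for more than it was granted; the call is refused."""
    return {}
```

Calling `parse_log("user=alice action=login")` returns a dict and leaves two audit log entries, while `overreaching_skill(...)` raises `PermissionError` before any of its code runs. In a real deployment you would likely redact sensitive arguments before logging them.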

The underlying point is simple: skills are what make AI systems operationally useful. Without them, you have a very capable conversationalist. With them, you have something that can actually do work.

What We Mean by Agents

If skills are what an AI system can do, agents describe how those capabilities actually get used.

An agent is an AI-driven system that can plan, reason through a problem, and execute multi-step tasks by pulling on whatever skills it needs, in whatever order makes sense, to work a problem forward. It's not running a fixed playbook. It's figuring out the next step based on what it knows, doing it, observing what happened, and deciding where to go from there.

The operating loop is pretty intuitive: perceive, reason, act, observe, and repeat. An alert comes in. The agent gathers context. It takes an action, maybe running enrichment or pulling related logs. It looks at the results and decides what to do next. That loop continues until the task is resolved or it hits something that needs a human call.
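Here is a rough sketch of that loop in Python. Everything in it is a stand-in: the two skills return canned data, the indicator value is a documentation-range IP address, and `choose_next_step` uses simple rules where a real agent would typically ask a model to decide.

```python
from typing import Callable, Dict, List


# Hypothetical skills; each returns new facts to merge into the context.
def enrich_indicator(ctx: dict) -> dict:
    known_bad = ctx["indicator"] == "203.0.113.7"
    return {"reputation": "malicious" if known_bad else "unknown"}


def pull_related_logs(ctx: dict) -> dict:
    return {"related_events": 3}


SKILLS: Dict[str, Callable[[dict], dict]] = {
    "enrich_indicator": enrich_indicator,
    "pull_related_logs": pull_related_logs,
}


def choose_next_step(ctx: dict) -> str:
    """Reason: pick the next action from what is known so far."""
    if "reputation" not in ctx:
        return "enrich_indicator"
    if ctx["reputation"] == "malicious" and "related_events" not in ctx:
        return "pull_related_logs"
    if ctx["reputation"] == "malicious":
        return "escalate"  # hand off to a human
    return "close"


def run_agent(alert: dict, max_steps: int = 10) -> dict:
    ctx = dict(alert)                    # perceive: start from the alert
    trace: List[str] = []
    for _ in range(max_steps):           # hard cap so the loop always ends
        step = choose_next_step(ctx)     # reason
        trace.append(step)
        if step in ("escalate", "close"):
            break
        ctx.update(SKILLS[step](ctx))    # act, then observe the result
    ctx["trace"] = trace
    return ctx
```

For the alert `{"indicator": "203.0.113.7"}` the trace is enrich, pull logs, escalate; for an unknown indicator it is enrich, then close. The sequence is not hardcoded anywhere: it falls out of what each step returns.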

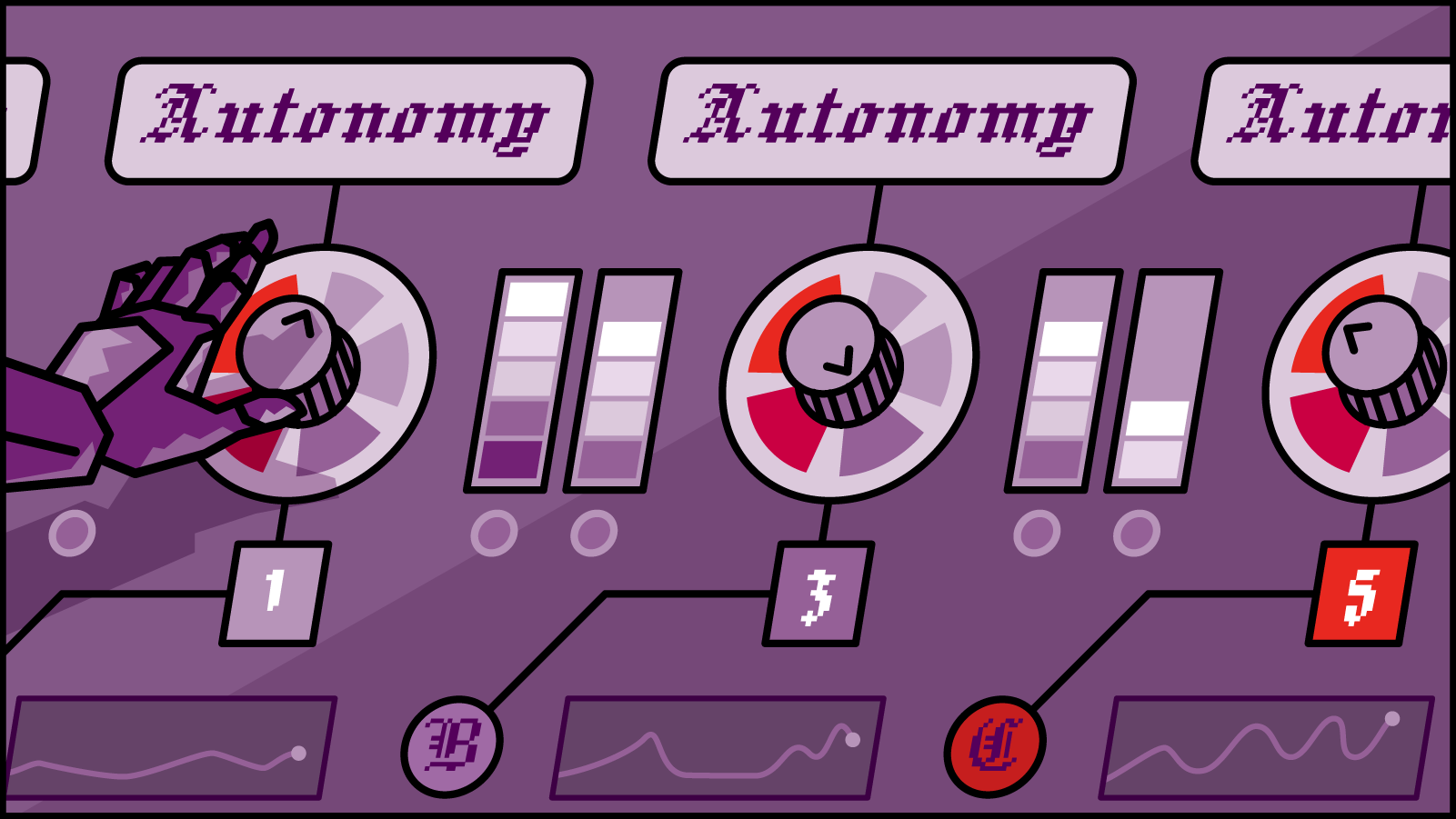

The autonomy dial can be set a lot of different ways. Some agents work closely with analysts, surfacing context, suggesting next steps, and doing the legwork while a human stays in control of decisions. Others run more independently on well-defined, routine tasks. Where you set that dial depends on how much you trust the system and how consequential the actions are. Enriching an alert is a lot lower stakes than initiating containment.
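One way to picture the dial is as a threshold: actions at or below the configured risk level run automatically, and anything above it waits for a human. The sketch below assumes a three-level risk scale and an action-to-risk mapping, both invented for this example; your own tiers and action names will differ.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1     # e.g. enriching an alert
    MEDIUM = 2  # e.g. creating a ticket
    HIGH = 3    # e.g. initiating containment


# Hypothetical mapping of actions to risk levels.
ACTION_RISK = {
    "enrich_alert": Risk.LOW,
    "create_ticket": Risk.MEDIUM,
    "isolate_host": Risk.HIGH,
}


def needs_human_approval(action: str, autonomy: Risk) -> bool:
    """Return True when the action's risk exceeds the autonomy setting.

    Actions missing from the mapping are treated as HIGH risk, so
    anything unrecognized defaults to asking a human.
    """
    return ACTION_RISK.get(action, Risk.HIGH) > autonomy
```

With the dial at `Risk.MEDIUM`, enrichment and ticket creation proceed on their own while `isolate_host` is held for approval. Treating unknown actions as high risk is a deliberate choice here: the safe default is to ask.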

Several use cases are already showing up in production: triage agents that handle initial alert enrichment and context gathering before a human ever looks at the alert; threat hunting agents that work through telemetry looking for patterns or anomalies; and incident response agents that help coordinate containment steps and evidence collection across tools.

The shift here is real.

AI isn't just something analysts query anymore. Agents can participate more directly in the work, running down leads, correlating data, and executing defined response steps at a speed and volume that human teams can't match.

Skills vs. Agents: A Useful Distinction

The simplest way I've found to keep these straight: skills are verbs, agents are roles.

A skill looks up a CVE. An agent is the thing that decides which CVE to look up, based on what it just found in a log, and then decides what to do with the result.

Skills are narrow and bounded. They do one thing and they do it reliably. Agents are stateful and adaptive. They're managing a process that unfolds over time, with multiple decisions and feedback loops along the way.

They work together, not in competition. Agents coordinate skills. A phishing classification function is a skill. A system that receives an alert, pulls relevant logs, enriches the indicators, classifies the threat, and surfaces a recommended response is acting like an agent. The agent is the orchestration layer; the skills are the actual capabilities it has to work with.

In practice, the choice between the two comes down to scope. If you're solving a single, well-defined task, a skill is usually the right fit. If you're managing a larger process with branching logic, dependencies, and results that feed back into further decisions, that's where agents come in.

Bringing This Into Your Security Workflow

The best entry points are the ones that are already painful.

Most security teams have a pretty clear mental list: the alert backlog that never shrinks, the repetitive enrichment work that eats analyst time, the slow-moving investigations that sit in queue for days, the manual handoffs between tools. Those friction points are where skills and agents tend to deliver the most obvious value, and they're where adoption tends to stick.

The path most teams follow looks something like this:

Augmentation first. Start with skills that assist analysts on focused tasks: enrichment, summarization, triage scoring. The humans are still in control; the AI is just doing the repetitive parts. This builds confidence and surfaces edge cases before you've automated anything consequential.

Then automation. As the system proves itself, you start letting agents handle routine, well-defined processes end-to-end. Alert triage. Ticket creation. Basic containment steps with clear criteria. You're still watching it, but you're not manually doing every step.

Then orchestration. This is where agents start coordinating other agents and tools across more complex, multi-domain investigations. This phase requires real trust in the system, solid governance, and a clear picture of where human judgment still needs to be in the loop.

On the integration side, these systems connect into the tools you're already using: SIEM, SOAR, EDR, ticketing systems, Slack, Teams. The technical integration is usually the more straightforward part. The harder part is the operational change.

When agents are handling alert triage, what does that mean for the analyst who used to do that work? What's the escalation path when the agent hits something it can't classify? Who's accountable when an automated response action turns out to be wrong? These are questions you want to answer before you're in an incident, not during one.

To measure impact, most teams focus on mean time to detect (MTTD) and mean time to respond (MTTR). Also watch analyst capacity metrics, such as how much time is getting freed up for higher-value work, and check whether false positive rates are actually improving over time.
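If you want to track the two time-based metrics yourself, the arithmetic is simple. The sketch below assumes one common definition: time to detect runs from when an incident occurred to when it was detected, and time to respond runs from detection to resolution. Teams define these boundaries differently, so adjust to match your own reporting. The `mttd_mttr` function and the tuple layout are illustrative, not taken from any particular tool.

```python
from datetime import datetime, timedelta
from statistics import mean


def mean_minutes(intervals) -> float:
    """Average a list of timedeltas and return the result in minutes."""
    return mean(delta.total_seconds() for delta in intervals) / 60


def mttd_mttr(incidents):
    """Compute (MTTD, MTTR) in minutes.

    incidents: iterable of (occurred, detected, resolved) datetimes.
    """
    incidents = list(incidents)  # allow generators; we iterate twice
    detect = [detected - occurred for occurred, detected, _ in incidents]
    respond = [resolved - detected for _, detected, resolved in incidents]
    return mean_minutes(detect), mean_minutes(respond)


# Example with two made-up incidents starting at the same time.
start = datetime(2024, 1, 1, 9, 0)
sample = [
    (start, start + timedelta(minutes=10), start + timedelta(minutes=40)),
    (start, start + timedelta(minutes=30), start + timedelta(minutes=90)),
]
mttd, mttr = mttd_mttr(sample)
```

For the sample data, detection took 10 and 30 minutes (mean 20) and response took 30 and 60 minutes (mean 45). Comparing these numbers before and after introducing an agent is more informative than either figure on its own.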

Conclusion

Skills and agents aren't here to replace security professionals. If anything, the teams that will struggle are the ones that think replacement is the goal.

What these tools actually do is extend what a team can see, analyze, and act on. They handle the volume and the repetition. They make it possible for experienced analysts to focus on the things that actually require experience: judgment calls, novel threats, decisions that don't fit cleanly into a defined workflow.

The competitive reality is already becoming visible. Teams that learn how to build, integrate, and govern these capabilities are going to operate at a fundamentally different scale than teams that don't. That's not hype; it's just what happens when you can handle five times the alert volume with the same headcount.

But the challenge isn't just adopting AI. It's figuring out how to structure it within real security operations: where automation genuinely helps, where it introduces risk, and where human judgment stays essential. That balance is going to keep evolving as these systems get more capable and more embedded across the stack.

The security workforce of the near future won't be purely human or purely automated. The interesting work is figuring out how to make the collaboration actually function.

About the Author

Brian is a Principal Engineer at Cloud Security Partners. He has dedicated his 20-year career to cybersecurity, gaining expertise across offensive and defensive security. He began with a focus on application and penetration testing before transitioning to securing cloud infrastructures for both startups and enterprises. In addition to his security expertise, Brian has recently worked as a software engineer, developing enterprise cloud security solutions, leading engineering teams, managing people, shaping product direction, and maintaining strong customer relationships. His broad experience allows him to bridge the gap between security, engineering, and business needs.

Outside of work, Brian enjoys spending time with his family, hiking, and playing board games.
